API governance is one of those disciplines everyone agrees matters, yet too many organisations still handle it too late.

Teams often move from idea to technical design before they have answered a simpler question: what business change is this API really enabling, and who will feel the impact? By the time that question is asked, governance has already become a delay.

That model does not scale.

If organisations want faster delivery without losing control, they need to move API governance upstream. And increasingly, that means using AI not as a replacement for architects, but as a force multiplier for governance.

The real problem with API governance

The issue is not a lack of standards.

Most enterprises already have OpenAPI guidelines, security rules, naming conventions, approval forums, and architecture review processes. The problem is that these controls are often applied inconsistently, manually, and too late in the lifecycle.

That creates predictable pain:

- Teams skip the business impact step and go straight to technical design.

- Reviewers spend time rediscovering the same issues.

- Governance calls become debate sessions instead of decision sessions.

- Changes that should take hours end up taking weeks.

- Standards exist, but enforcement depends on people remembering them.

In practice, this turns API governance into a bottleneck rather than a safeguard.

A better workflow

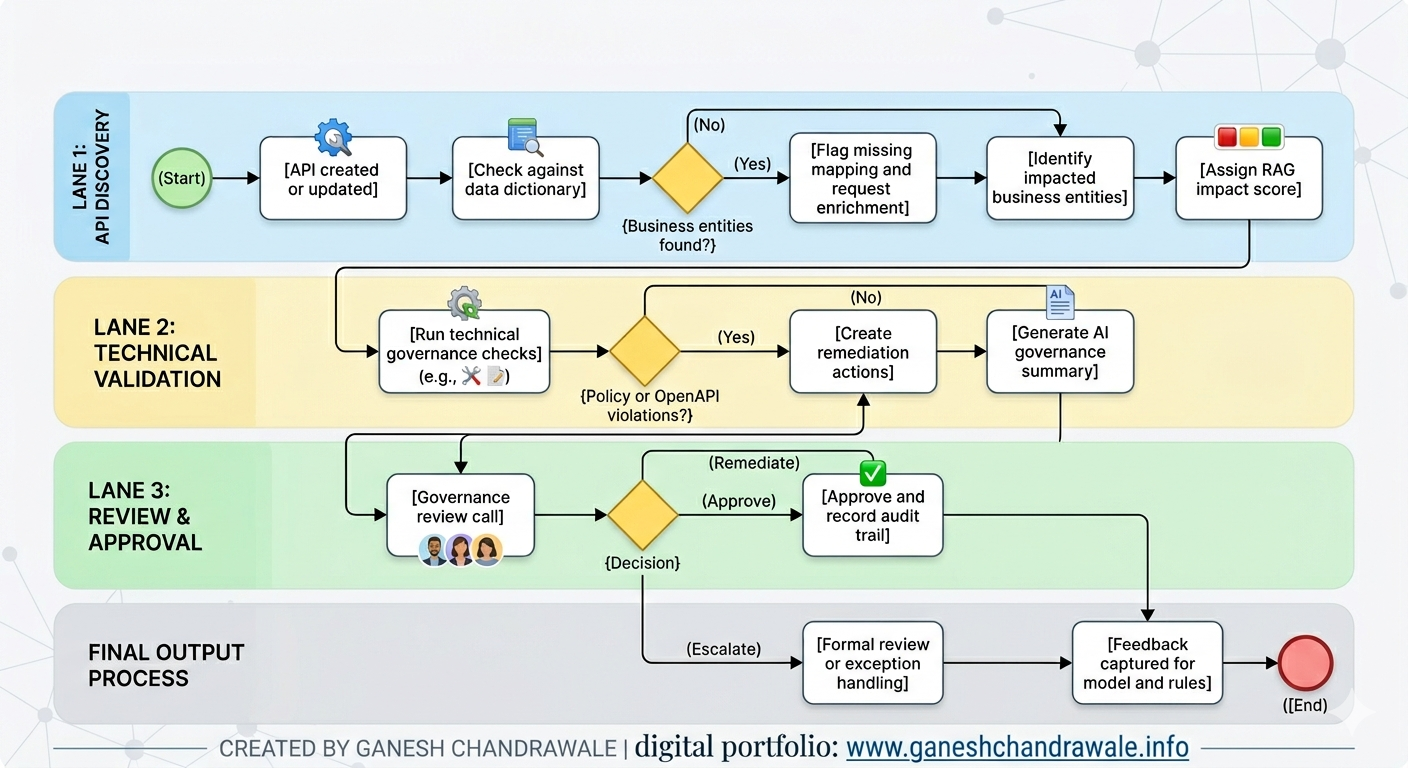

A more effective model is to treat API governance as a repeatable workflow, not a one-off review.

1. API change is created or updated

A new API or API change is submitted through a portal, repository, or request workflow. At this point, the system should capture both the technical specification and a short business description of the change.

2. Business entity mapping happens first

Before the team dives into technical design, the API is checked against the enterprise data dictionary or business entity catalogue. This identifies which business entities, domains, customers, processes, or systems are affected.

This step is often missed, but it is essential. If you do not know what business concepts an API touches, you cannot properly assess impact.
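As a minimal sketch of what this mapping could look like, the example below matches schema names from an OpenAPI document against a small in-memory catalogue. The catalogue contents, the alias sets, and the `map_entities` function are illustrative assumptions, not part of any specific product; a real catalogue would usually live in a data dictionary or metadata platform.

```python
# Hypothetical catalogue: canonical entity -> owning domain and known aliases.
ENTITY_CATALOGUE = {
    "Customer": {"domain": "CRM", "aliases": {"customer", "client", "accountholder"}},
    "Payment": {"domain": "Finance", "aliases": {"payment", "transaction"}},
    "Order": {"domain": "Sales", "aliases": {"order", "purchaseorder"}},
}


def normalise(name: str) -> str:
    """Lower-case a schema name and drop separators, so 'Purchase_Order' becomes 'purchaseorder'."""
    return "".join(ch for ch in name.lower() if ch.isalnum())


def map_entities(spec: dict) -> dict:
    """Split the spec's schema names into catalogue matches and unmapped names."""
    schemas = spec.get("components", {}).get("schemas", {})
    matched, unmapped = {}, []
    for schema_name in schemas:
        key = normalise(schema_name)
        for entity, info in ENTITY_CATALOGUE.items():
            if key in info["aliases"]:
                matched[schema_name] = {"entity": entity, "domain": info["domain"]}
                break
        else:
            # No catalogue entry matched: flag it for a human to classify.
            unmapped.append(schema_name)
    return {"matched": matched, "unmapped": sorted(unmapped)}


spec = {"components": {"schemas": {"Client": {}, "Purchase_Order": {}, "LoyaltyTier": {}}}}
result = map_entities(spec)
print(result)
```

Unmapped names are as useful as matches here: they point either to a gap in the catalogue or to a concept the API is introducing without an owner.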

3. Impact is classified using a RAG rating

The change is scored using a simple red, amber, green (RAG) model:

- Green: low risk, backward compatible, limited impact.

- Amber: moderate impact, needs review or minor remediation.

- Red: high risk, breaking, regulated, or cross-domain.

This gives stakeholders a quick view of the likely business and technical impact without forcing them to read every line of the spec first.
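One way to make the red, amber, green rubric repeatable is to express it as a small function. The sketch below is an assumption about how the three levels might be encoded: the input flags (`breaking`, `regulated`, `domains_affected`, `needs_remediation`) are hypothetical names, and each organisation would choose its own criteria and thresholds.

```python
from enum import Enum


class Rag(Enum):
    GREEN = "green"
    AMBER = "amber"
    RED = "red"


def classify_impact(
    breaking: bool,
    regulated: bool,
    domains_affected: int,
    needs_remediation: bool,
) -> Rag:
    """Apply the rubric from most to least severe so the highest rating wins."""
    # Red: breaking, regulated, or cross-domain (more than one domain touched).
    if breaking or regulated or domains_affected > 1:
        return Rag.RED
    # Amber: not red, but something still needs review or a minor fix.
    if needs_remediation:
        return Rag.AMBER
    # Green: backward compatible, single domain, nothing outstanding.
    return Rag.GREEN


rating = classify_impact(
    breaking=False, regulated=False, domains_affected=2, needs_remediation=False
)
print(rating.value)
```

Checking the red conditions first means a change can never be downgraded by a later rule, which keeps the rubric easy to explain to a governance forum.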

4. Technical governance checks are applied

The API is checked against enterprise policy and technical standards, such as:

- OpenAPI compliance.

- Naming and tagging standards.

- Required security controls.

- Error handling conventions.

- Versioning and deprecation rules.

- Organisation-specific API guidelines.

This is where policy-as-code and linting become valuable. Rules should be enforced consistently, not interpreted differently by every reviewer.
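To illustrate the idea, here is a minimal policy-as-code sketch in which each rule is a plain function over a parsed OpenAPI document. The three rules (kebab-case paths, mandatory tags, a declared security requirement) are example policies chosen for illustration, not a recommended standard. In practice many teams would express similar rules in an existing OpenAPI linter rather than hand-rolling them.

```python
import re

HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options", "trace"}
# A path segment is either a {parameter} or lower kebab-case text.
KEBAB_SEGMENT = re.compile(r"^(\{[A-Za-z0-9_]+\}|[a-z0-9]+(-[a-z0-9]+)*)$")


def operations(spec):
    """Yield (path, method, operation) for every operation in the spec."""
    for path, item in spec.get("paths", {}).items():
        for method, op in item.items():
            if method in HTTP_METHODS:
                yield path, method, op


def rule_kebab_case_paths(spec):
    for path in spec.get("paths", {}):
        segments = [s for s in path.split("/") if s]
        if not all(KEBAB_SEGMENT.match(s) for s in segments):
            yield f"naming: path '{path}' is not lower kebab-case"


def rule_operations_tagged(spec):
    for path, method, op in operations(spec):
        if not op.get("tags"):
            yield f"tagging: {method.upper()} {path} has no tags"


def rule_security_declared(spec):
    for path, method, op in operations(spec):
        # Operation-level security overrides the top-level default, even when empty.
        effective = op.get("security", spec.get("security"))
        if not effective:
            yield f"security: {method.upper()} {path} declares no security requirement"


RULES = [rule_kebab_case_paths, rule_operations_tagged, rule_security_declared]


def lint(spec):
    """Run every rule and collect violations in rule order."""
    return [violation for rule in RULES for violation in rule(spec)]


spec = {
    "openapi": "3.0.3",
    "paths": {
        "/customerOrders/{orderId}": {"get": {"tags": ["orders"]}},
        "/payments": {"post": {"security": [{"oauth2": ["payments:write"]}]}},
    },
}
violations = lint(spec)
for v in violations:
    print(v)
```

Because every rule has the same shape, adding an organisation-specific guideline means adding one function to `RULES` instead of briefing every reviewer.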

5. AI generates the review pack

Instead of expecting architects and stakeholders to manually assemble the review materials, AI can summarise:

- What changed.

- Which entities are affected.

- What the business risk is.

- Which technical rules are broken or missing.

- What remediation is recommended.

- Whether the change should be approved, remediated, or escalated.

This does not replace judgment. It removes the repetitive work that slows judgment down.
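The review pack is easier to trust when the AI summary sits on top of structured facts and does not replace them. The sketch below shows one possible shape for that structure, with a deterministic suggested outcome that a language model could then explain in plain language. The `ReviewPack` class, its fields, and the outcome rules are assumptions for illustration, and no model call is shown.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewPack:
    change_summary: str
    entities: list[str]
    rag: str  # "green", "amber", or "red"
    violations: list[str] = field(default_factory=list)

    def recommendation(self) -> str:
        """Suggest approve, remediate, or escalate; the forum still decides."""
        if self.rag == "red":
            return "escalate"
        if self.violations or self.rag == "amber":
            return "remediate"
        return "approve"

    def render(self) -> str:
        """Produce a plain-text pre-read covering the items reviewers need."""
        lines = [
            f"What changed: {self.change_summary}",
            f"Entities affected: {', '.join(self.entities) or 'none identified'}",
            f"Business risk (RAG): {self.rag}",
            "Rules broken or missing:",
        ]
        lines += [f"  - {v}" for v in self.violations] or ["  - none"]
        lines.append(f"Suggested outcome: {self.recommendation()}")
        return "\n".join(lines)


pack = ReviewPack(
    change_summary="Renamed /customerOrders to /customer-orders",
    entities=["Customer", "Order"],
    rag="red",
    violations=["security: GET /customer-orders declares no security requirement"],
)
print(pack.render())
```

Keeping the suggested outcome rule-based means the generated narrative can be checked against facts the forum can verify for itself.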

6. Governance review becomes a decision point

The governance forum then reviews a concise, AI-generated impact pack rather than starting from scratch.

That means the meeting can focus on decisions:

- Is the business impact acceptable?

- Are the technical standards satisfied?

- Does this require exception approval?

- Can it be fast-tracked?

7. Feedback improves the workflow over time

Every review outcome should feed back into the process.

If reviewers repeatedly flag the same issue, that rule should become automated. If a type of change consistently proves low risk, it should move into a lighter approval path. Over time, the process becomes faster, more accurate, and more trusted.

That is how you evolve from manual governance to assisted governance, and eventually to selective automation.
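A feedback loop like this can start as a simple tally over past review records. The sketch below assumes each record holds the reviewer findings, the change type, and the rating it received; the record shape, the function names, and the threshold of three occurrences are all hypothetical choices.

```python
from collections import Counter, defaultdict


def automation_candidates(reviews, min_occurrences=3):
    """Findings raised in at least min_occurrences reviews: candidates for a lint rule."""
    counts = Counter(f for r in reviews for f in set(r["findings"]))
    return sorted(f for f, n in counts.items() if n >= min_occurrences)


def fast_track_candidates(reviews, min_reviews=3):
    """Change types rated green every time across at least min_reviews reviews."""
    ratings = defaultdict(list)
    for r in reviews:
        ratings[r["change_type"]].append(r["rag"])
    return sorted(
        change_type
        for change_type, seen in ratings.items()
        if len(seen) >= min_reviews and all(x == "green" for x in seen)
    )


reviews = [
    {"change_type": "add-optional-field", "rag": "green", "findings": ["missing-tags"]},
    {"change_type": "add-optional-field", "rag": "green", "findings": []},
    {"change_type": "add-optional-field", "rag": "green", "findings": ["missing-tags"]},
    {"change_type": "new-endpoint", "rag": "amber", "findings": ["missing-tags", "no-error-schema"]},
    {"change_type": "new-endpoint", "rag": "green", "findings": []},
]
to_automate = automation_candidates(reviews)
to_fast_track = fast_track_candidates(reviews)
print(to_automate, to_fast_track)
```

The output is a list of proposals only. Promoting a finding to an automated rule, or a change type to a lighter path, remains a decision for the governance owners.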

Why this matters now

API delivery is accelerating, but governance often is not. At the same time, organisations are under growing pressure from security, compliance, interoperability, and platform complexity.

This makes API governance more important, not less.

Organisations that get this right are better placed to reduce review cycle time, improve API quality, increase consistency, and lower operational risk. They also make it easier for developers to do the right thing early, rather than forcing them to fix issues late in the process.

That is a real business advantage, not just a technical one.

What organisations struggle with today

In most enterprises, the governance struggle is not a lack of intent. It is a lack of operating discipline.

Typical issues include:

- No single source of truth for business entities.

- Inconsistent API design standards across teams.

- Governance reviews that happen too late.

- Too much dependence on manual review.

- Poor visibility of downstream impact.

- Approval cycles that are driven by meetings rather than evidence.

- Standards that exist in documents but are not embedded into delivery.

These are exactly the kinds of issues that make API governance feel slow, frustrating, and expensive.

Where the quick wins are

The good news is that organisations do not need a massive transformation programme to improve.

A few low-investment changes can deliver immediate value:

- Introduce a shared business entity catalogue.

- Require every new API or change request to map to business entities.

- Add OpenAPI linting into the CI/CD pipeline.

- Add breaking-change detection to pull requests.

- Use a standard RAG-based impact rubric.

- Generate governance pre-reads automatically.

- Keep the governance forum focused on exceptions and decisions.

These improvements are relatively low cost because they mostly use existing repositories, standards, and review processes. The main effort is in defining the workflow clearly and sticking to it consistently.
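As an illustration of how small the first step can be, the sketch below compares two versions of an OpenAPI document and reports two kinds of breaking change: removed operations and newly required parameters. It is deliberately incomplete (it does not resolve `$ref` parameters or inspect schemas), and the function names are assumptions. Dedicated diff tools cover far more cases.

```python
HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options", "trace"}


def ops(spec):
    """Index operations by (path, method)."""
    return {
        (path, method): op
        for path, item in spec.get("paths", {}).items()
        for method, op in item.items()
        if method in HTTP_METHODS
    }


def required_params(op):
    """Inline parameters marked required; $ref parameters are skipped in this sketch."""
    return {
        (p["name"], p["in"])
        for p in op.get("parameters", [])
        if "name" in p and p.get("required")
    }


def breaking_changes(old, new):
    old_ops, new_ops = ops(old), ops(new)
    findings = []
    for key in sorted(old_ops):
        path, method = key
        if key not in new_ops:
            findings.append(f"removed operation {method.upper()} {path}")
            continue
        added = required_params(new_ops[key]) - required_params(old_ops[key])
        for name, location in sorted(added):
            findings.append(
                f"new required {location} parameter '{name}' on {method.upper()} {path}"
            )
    return findings


old = {"paths": {"/orders": {"get": {}, "delete": {}}}}
new = {
    "paths": {
        "/orders": {
            "get": {"parameters": [{"name": "region", "in": "query", "required": True}]}
        }
    }
}
changes = breaking_changes(old, new)
for c in changes:
    print(c)
```

Run against the base and head versions of a spec in a pull request, a non-empty result could mark the change for review instead of a fast path.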

What exists in the market

There are tools that support parts of this pattern, but very few that deliver the full workflow end to end.

Some platforms are strong at API design and governance rules. Others focus on runtime visibility, linting, lifecycle automation, or breaking-change detection. Some newer products are adding AI-assisted review and compliance features.

But the specific combination of:

- business entity mapping,

- AI-generated impact assessment,

- RAG scoring,

- technical policy validation,

- and governance approval workflow

is still more of a custom enterprise capability than a packaged standard.

That is important.

It means there is still room for organisations to design a governance approach that fits their business rather than accepting a generic platform workflow that only solves part of the problem.

The maturity path

A sensible roadmap looks like this:

Phase 1: Human-assisted governance

AI helps prepare summaries and identify issues, but humans make all decisions.

Phase 2: Hybrid governance

Rules, linting, and impact scoring run automatically. Humans review only exceptions and higher-risk changes.

Phase 3: Selective automation

Low-risk changes can be auto-approved when they meet defined policy thresholds.

Phase 4: Embedded governance

Governance becomes part of normal delivery rather than a separate review stage.

That path is realistic, safe, and scalable.
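To make the Phase 3 idea concrete, the sketch below routes a change to auto-approval only when it meets every threshold in an explicit policy object. The `AutoApprovalPolicy` fields, their defaults, and the shape of the `change` record are hypothetical. The point is that the thresholds are declared in one place and can be tightened or relaxed deliberately.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AutoApprovalPolicy:
    allowed_ratings: frozenset = frozenset({"green"})
    max_open_violations: int = 0
    require_backward_compatible: bool = True


def route(change: dict, policy: AutoApprovalPolicy = AutoApprovalPolicy()) -> str:
    """Return 'auto-approve' only if every policy threshold is met."""
    eligible = (
        change["rag"] in policy.allowed_ratings
        and len(change["violations"]) <= policy.max_open_violations
        and (change["backward_compatible"] or not policy.require_backward_compatible)
    )
    return "auto-approve" if eligible else "human-review"


low_risk = {"rag": "green", "violations": [], "backward_compatible": True}
risky = {"rag": "amber", "violations": ["missing-tags"], "backward_compatible": True}
print(route(low_risk), route(risky))
```

Anything that fails a threshold falls back to human review, so the default behaviour stays conservative.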

Final thought

API governance should not be a slow administrative hurdle.

It should be a business-enabling control that helps organisations move faster with confidence. AI can help make that possible by doing the repetitive analysis work before people enter the room, so architects and stakeholders can focus on what they are actually there to do: make informed decisions.

The real opportunity is not to automate governance away. It is to make governance intelligent, repeatable, and fast enough to support modern delivery.

The views expressed in this article are those of the author and reflect independent practitioner analysis based on publicly available research and general professional experience. They do not represent the views of any employer, client, or organisation. All frameworks and patterns referenced are illustrative in nature.